Github repo: http://github.com/MandarGogate/Association-Rule-Mining-Hadoop-Python

A project by: Mandar Gogate, Abhishek Srivastava and Aman Ahuja

Introduction

In this work, we demonstrate association rule mining on the Hadoop distributed environment. We implement the FPtree association rule mining algorithm on Hadoop mapreduce framework to demonstrate data mining on distributed systems. As a case study, we use this algorithm to extract association rules from the USA deaths dataset, to determine the relationships between different factors related to deaths of the people in the United States.

A case study on mining association rules between different factors related to deaths of people in the United States

Background and Motivation

Association rule mining is the method for discovering association rules between various parameters in the dataset. This is widely useful in systems such as ecommerce and supermarkets, where the association between the purchases of different products by the customers can be useful in marketing.

The process of mining association rules mainly involves the following two steps:

- A minimum support threshold is applied to find all frequent itemsets in a database.

- A minimum confidence constraint is applied to these frequent itemsets in order to form rules.

Apriori Algorithm

One of the most widely used algorithms in the field of association rule mining is the Apriori algorithm, which uses a breadthfirst strategy to discover association rules between the parameters. It proceeds by identifying the frequent individual items in the database and extending them to larger and larger item sets as long as those item sets appear sufficiently often in the database. One of the key limitations of this algorithm is costly wasting of time to hold a vast number of candidate sets with much frequent item sets, low minimum support or large itemsets.

FPTree

The Frequent Pattern (FP) Tree growth algorithm overcomes the disadvantages of Apriori algorithm by limiting the number of database scans needs for association rule discovery. The number of database scans needed in FPTree algorithm is reduced to 2.

In the first scan, it counts the number of occurrences of each item in the dataset to find frequent 1itemsets. These counts are then sorted in the decreasing order, so that they can be used for the construction of FPtree in the second scan of the database. Items in each instance that do not meet minimum coverage threshold are discarded.

After the FPtree is constructed, it then recursively grows the patterns by traversing the FPtree.

Parallelizing FPtree growth algorithm:

In large datasets, constructing FPtree can be cumbersome and timeconsuming. To efficiently mine association rules on large datasets, we need to use distributed computing platforms so that the process can be simultaneously split across multiple processing nodes.

We implemented the FPtree algorithm on Hadoop mapreduce platform to efficiently mine association rules on a large dataset. The overall process is divided into two mapreduce phases. These two mapreduce phases divide the entire algorithm implementation into, intuitively, frequent pattern set generation and rule generation. Next we give an elaborate description of the process.

Map-reduce 1

Map: In the map step of the first mapreduce phase, we construct an FPtree on the datanodes for each exclusive set of 10,000 transactions, generated in a sequential manner,i.e the first 10000 form the first set, next 10000 the second set and so on. We use a local threshold, so that redundant itemsets are removed early on which optimizes the process further, reducing the following reducer’s work in the process.

Just the give a brief idea regarding the implenetation of the mapper, we create an FPTree class maintaining a header and a mapper table in the process, for in order indexing of the attributes. During the tree construction, the longest common prefix is found for each transaction and thus added to it. Kindly note that FpGrowth is the method actually responsible for each transaction addition to the tree.

Reduce: The reducer serves to combine the partial frequency counts of patterns and writes the summations out to the Hadoop file systems.

The next mapreduce does class association rule mining on the derived frequent item sets from the first phase of the algorithm implementation.

Map-reduce 2

Map: This mapper generates all sub patterns for each given frequent item set, by removing only one element. The sets now formed become the base for the rule mining process executed by the following Reducer.

Reduce: The second phase reducer combines the patterns passed on by the aforementioned mapper, generating one antecedent rules with confidence and support greater than the specified threshold.

These rules are then processed to extract maximum semantic meaning with respect to the dataset.

Dataset

To demonstrate our work, we use the USA deaths dataset (http://www.kaggle.com/cdc/mortality) , which contains information about every death that occurred in the United States in the year 2014. These details include information about the causes of the death, and the demographic background of the deceased. The original dataset contains details about the death of nearly 2.6 million people who died in the year 2014. Each record contains 38 attributes related to the deceased.

Data Preprocessing

We preprocessed the dataset, so that the redundant attributes were removed, and only the important attributes were left for each record. In total, 11 attributes were dropped, and the remaining 17 attributes were used in the association rule mining process.

These were:

● Resident status (state resident or not)

● Education (years of education using 2003 code)

● Month of death

● Sex (male/female)

● Age (divided in 12 bins)

● Marital status

● Day of week of death

● Injury at work

● Manner of death (accident/suicide/natural/disease)

● Method of disposition (burial/cremation)

● Autopsy performed (yes/no)

● Activity Code (While engaged in sports activity / While engaged in leisure activity)

● Place of Injury (Home/Institution)

● Type of disease

● Race (white/black)

● Bridged race

● Hispanic Origin

After filtering out the attributes, we then discretized all the attribute values. For example, the resident status of 0 or 1 was converted to A0 and A1 respectively for the rule mining process.

The dataset size was reduced from 270 MB to 156 MB after the preprocessing.

Experimental Results: Quantitative Results:

We now present the quantitative results of the experiments conducted the discussed dataset. The time required to for the algorithm to run on a single cluster Hadoop machine was nearly 2 hours. This was significantly reduced to nearly 50 mins, when the algorithm was run on multicluster setup consisting of 3 machines.

Visualisation of Results:

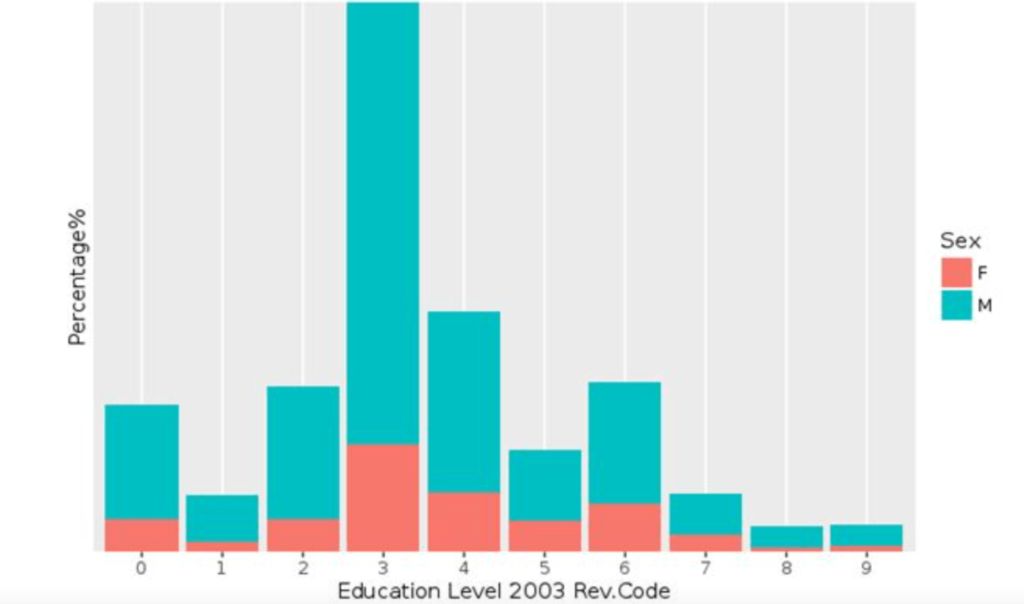

Percentage of suicide cases as per education level

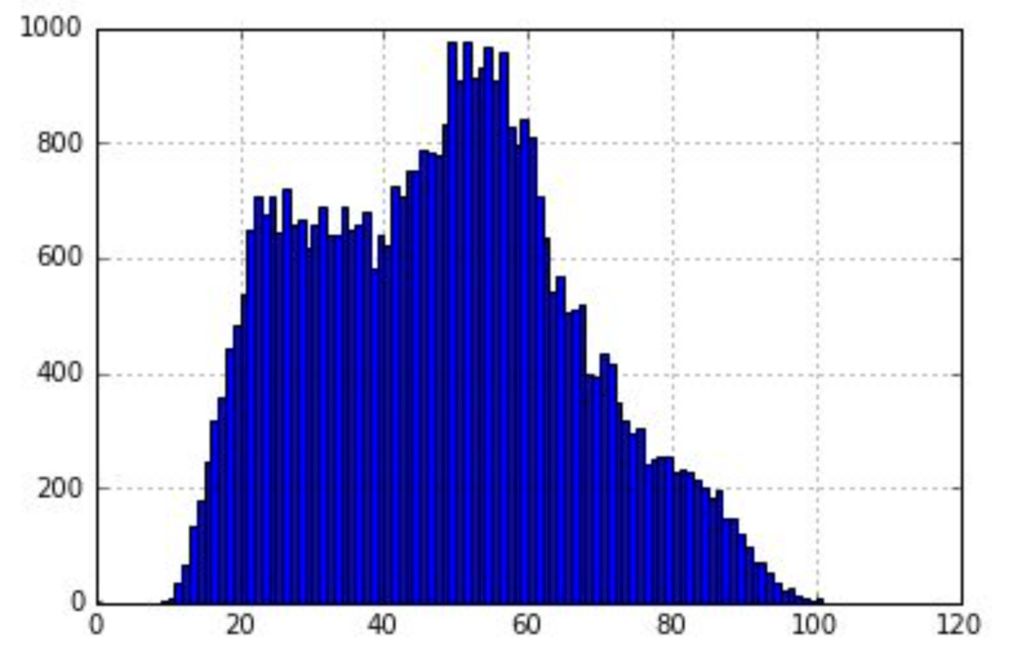

Suicides by age

Ordered causes of deaths

- Atherosclerotic heart disease

- Malignant neoplasm: Bronchus or lung

- Unspecified dementia

- Acute myocardial infarction

- Chronic obstructive pulmonary disease

- Alzheimer disease

- Stroke, not specified as haemorrhage or infarction

- Atherosclerotic cardiovascular disease

- Congestive heart failure